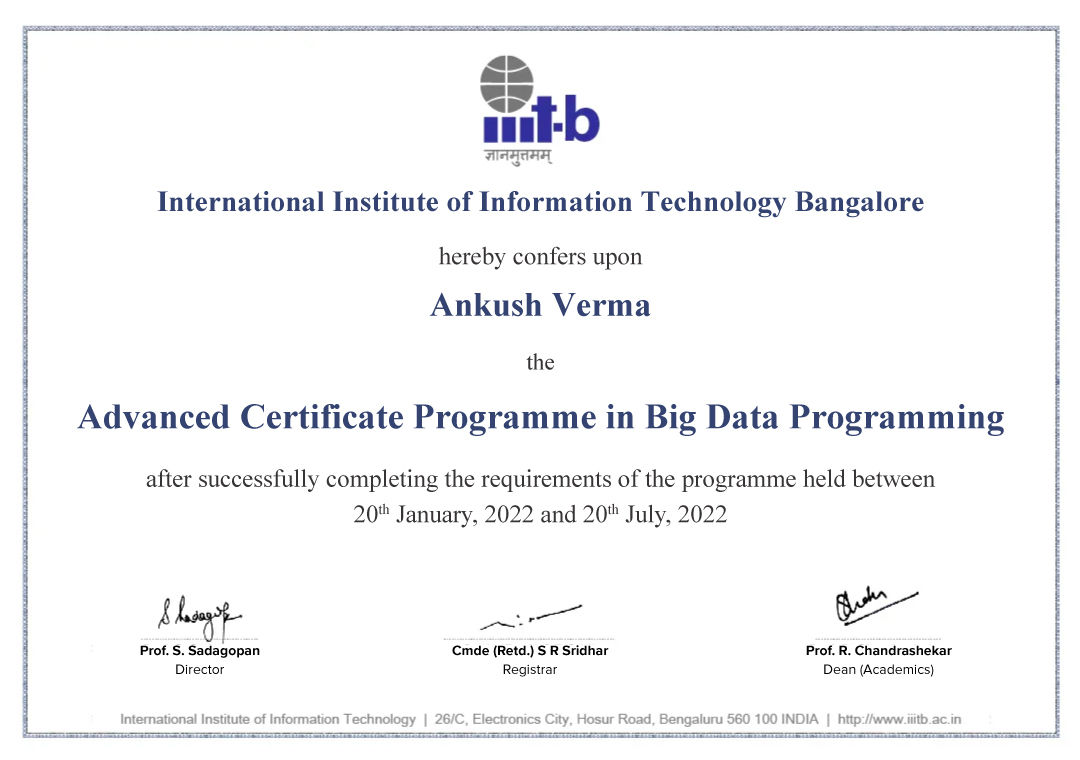

Advanced Certificate Program from IIIT Bangalore

Receive the prestigious Advanced Certificate & alumni status from IIIT Bangalore. Become part of the software development community.

- Build a strong network for life with opportunities to connect to Big Data industry experts & your experienced fellow learners.

- Gain theoretical knowledge & practical understanding with this cutting-edge curriculum.

- Learn multiple tools & languages to stand apart and gain a foothold in the Big Data industry.

Our Learners Work At

Top companies from all around the world have recruited upGrad alumni

Instructors

Learn from India's leading Big Data faculty and industry leaders

Programming Languages, Tools, Libraries

Industry Projects

Learn through real-life industry projects, assignments & case studies

- Network with peers by collaborating on projects

- Guidance from an experienced industry mentor to achieve concrete learning outcomes

- Personalised subjective feedback on your submissions to help you improve

Data Processing using Spark

Use Spark to process a large data set.

ETL Data Pipeline

Make use of Sqoop, Redshift & Spark to design an ETL data pipeline.

Real Time Data Processing

Build an end-to-end real-time data processing application using Spark Streaming and Kafka.

Refer someone you know and receive Amazon.com vouchers worth 49 USD!*

*More details are available in the referral policy under the Support section.